Teachers are quietly funding their own AI tools to survive administrative burnout. Cybercriminals are using those same AI tools to attack the schools they work in. These stories are connected.

The pressure on educators to do more with less has created a shadow economy of personal AI subscriptions. These tools are unvetted, unmonitored, and invisible to district IT. At the same time, the threat landscape facing schools has never been more sophisticated. AI is supercharging cyberattacks while federal cybersecurity support is being dismantled. Districts are caught in the middle: moving too slowly to give teachers what they need, while moving too slowly to protect against what's coming.

Some folks have reached out to us asking how to subscribe themselves or their colleagues to this newsletter. Click here to do so. We send a newsletter every two weeks about the latest happenings with K-12 and AI.

IN THIS ISSUE:

The hidden cost of Shadow AI — why teachers buying their own tools isn't just a budget problem, it's a security crisis.

The AI-powered cyberattack surge — what your IT team is up against, and what the loss of federal support actually means for your district.

60%

District technology leaders who say AI will lead to new forms of cyberattacks against their schools (CoSN)

51%

Educators who expect the severity of cyberattacks against their districts to increase in the next year as a result of AI (EdWeek)

33%

District leaders who have reported receiving zero cybersecurity training in the last 5 years (EdWeek)

28%

Teachers in high-AI-use schools who report a large-scale data breach, compared to 18% in low-AI-use schools (CDT)

43%

Percentage of teachers who report using their own money to purchase digital tools for their classrooms. In 2026, this is manifesting as "Shadow AI," where teachers buy personal subscriptions to AI assistants to manage administrative burnout (Gallup / Programs.com).

6 weeks

Average amount of time K-12 teachers save per school year when they regularly use AI tools for planning, grading, and administrative tasks (Gallup).

While district leaders are often the ones signing the contracts, research shows a long-standing disconnect in who actually funds classroom technology. According to Gallup, 43% of K-12 teachers report using their own money to purchase digital learning tools for their students. In the AI era, this "out-of-pocket" trend is accelerating.

As districts move cautiously to vet generative AI, teachers are bypassing procurement to buy "time" (an average of 6 weeks saved per year) with personal subscriptions. This surge in "Shadow AI" comes as teachers look for immediate relief from administrative burnout that official district roadmaps haven't yet addressed.

OUR TWO CENTS

We are witnessing the rise of the "Shadow AI Stack."

When districts move too slowly to vet and provide enterprise-grade tools, teachers don't stop using AI, they just stop telling us about it.

By paying for personal subscriptions, teachers are essentially subsidizing district operations, but they are doing so at a high cost to security and equity. A teacher in one room might have a $20 a month "Super-Assistant" that handles lesson planning and IEP drafts, while the teacher next door is drowning in manual paperwork with no budget for such a tool. This creates a "zip code effect" within the same hallway.

And the data privacy implications are real. Personal subscriptions mean student data is flowing through consumer-grade tools with no district oversight and no audit trail. Every Shadow AI subscription is an unmanaged data liability sitting inside your network.

We cannot let AI literacy become a private luxury for teachers who can afford it. Districts must move from "permitting" AI to "providing" it if they want to maintain any standard of data privacy or instructional equity.

Survey your teachers (honestly). Ask what AI tools they are already using, whether district-approved or not. You can't secure what you can't see.

Make cybersecurity part of your AI professional development. If you're training teachers on how to use AI tools, that's the moment to also train them on how those tools can be weaponized against them. Teachers who understand that a perfectly written email from "the superintendent" could be AI-generated are your first line of defense. Awareness is infrastructure.

The risk of Shadow AI isn't just about data privacy, it’s about systemic vulnerability. As teachers look for "Super-Assistant" tools, they are increasingly experimenting with “Agentic AI”: tools that don't just write text, but actually do things on your computer (managing files, browsing the web, or running scripts). A recent investigation by 1Password into a tool called OpenClaw revealed how dangerous this "agentic" era is becoming.

In these systems, users install "Skills" (simple instruction files). 1Password found that top-downloaded AI skills were actually malware delivery vehicles. These malicious skills tricked the AI (and the user) into running "required dependencies" that were actually infostealing trojans.

Once run, this malware raids the device for:

Saved browser credentials and cookies

Access to cloud drives and payroll systems

Internal district session tokens

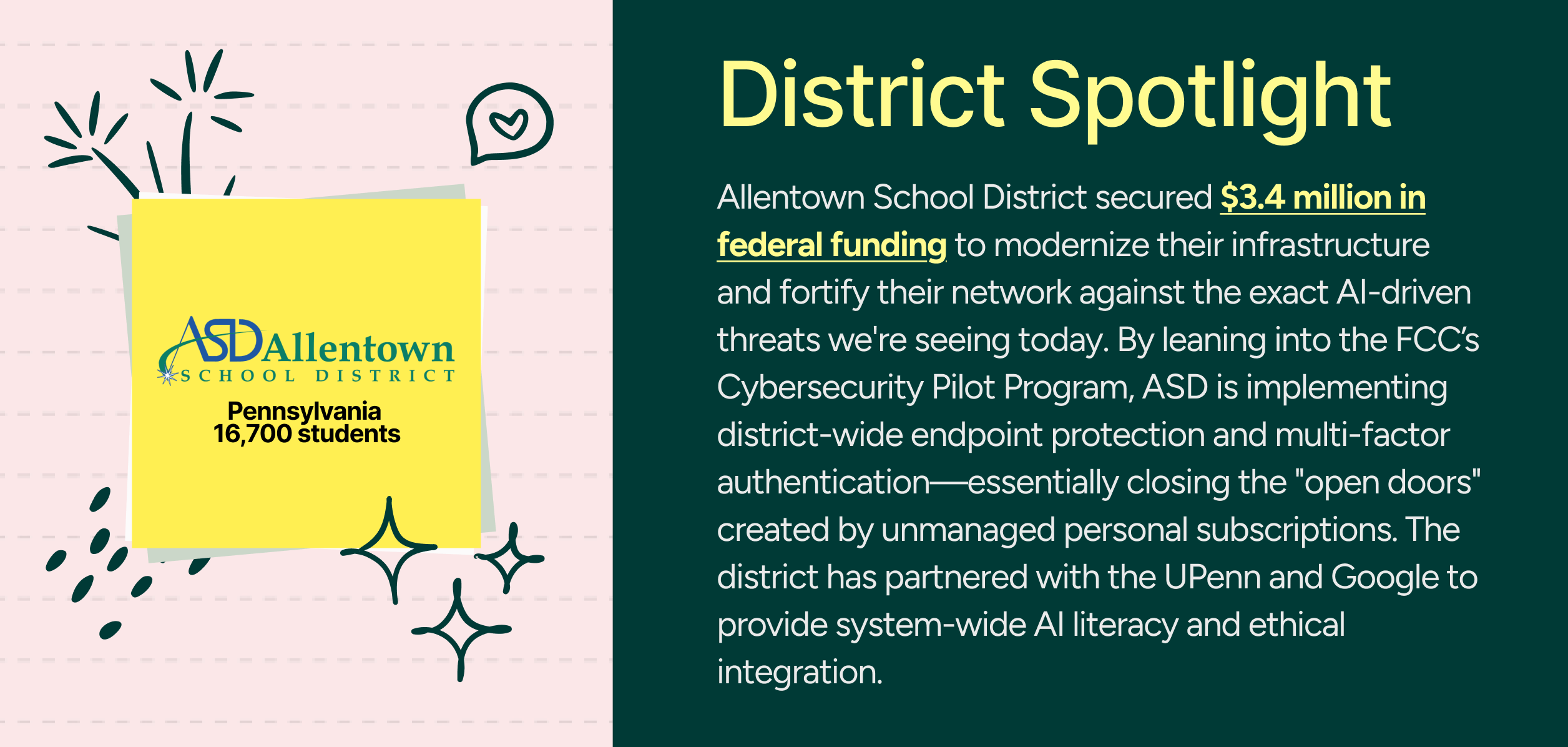

And this is happening at exactly the moment the federal government is pulling back. MS-ISAC, the primary free cybersecurity resource hub for schools, lost its federal funding. The Department of Education's Office of Educational Technology has been shuttered. The K-12 Cybersecurity Government Coordinating Council has been effectively suspended. Schools are being asked to defend against a more sophisticated threat landscape with fewer resources and less federal backup than they had two years ago (EdWeek).

OUR TWO CENTS

The schools most at risk right now are the ones that think they're fine because nothing has happened yet. Being a former K-12 CIO, I can vouch for your technology department. You’re not paranoid if they’re actually out to get you. Cyberattacks on schools aren't random; they're strategic. Children's data sells for a premium because they have clean credit histories (EdWeek).

The rise of agentic AI makes this even more urgent. We're no longer talking about AI helping a hacker write a better email. We're talking about AI autonomously scanning your network for vulnerabilities. Here is what keeps me up at night: the Shadow AI problem and the cybersecurity problem are the same problem. Every teacher using a personal subscription is an unmanaged entry point. Districts don't just need AI policies. They need visibility, and they needed it yesterday.

Treat "we haven't been attacked" as a risk signal. Complacency is the most exploited vulnerability in K-12 cybersecurity. Conduct a tabletop exercise with your leadership team — game out what a ransomware attack or deepfake wire fraud attempt would actually look like in your district.

Establish voice and identity verification protocols now. No financial transaction should ever be authorized based solely on a phone call or voicemail, regardless of who it sounds like. Create a code word system or a required callback to a known number for any payment authorization.

Run phishing simulations regularly. Fake phishing campaigns sent to staff that are followed by brief instructional videos for anyone who clicks are one of the highest-ROI investments in your cybersecurity posture.

Check your MS-ISAC status. If your district previously relied on MS-ISAC (Multi-State Information Sharing and Analysis Center — a mouthful) for free cybersecurity support, that access may have changed. Several states (Alaska, Connecticut, Kansas, Maine, Mississippi, New Jersey, Oregon, Texas, and Vermont) have statewide memberships that cover districts at no additional cost. Find out where your state stands.

Audit every AI tool accessible on school devices or connections. Consumer AI tools used without district oversight aren't just a privacy liability — they're an open door. You need to know what's running on your network before someone with bad intentions finds it first.

AI Is Not A Tech Project. It's a Leadership Issue from AI for Educators Daily with Dan Fitzpatrick (11-minute listen — Spotify or Apple Podcasts)

In this episode Dan argues that treating AI as a "side project" or a minor IT initiative is the fastest way to invite systemic failure. He insists that leadership must pivot from a "wait and see" approach to one of active, strategic investment. Dan makes it clear that while quality AI integration is expensive and complex, attempting to bypass those costs only leads to the rise of "Shadow AI"—the exact kind of unvetted, personal subscriptions that are currently hollowing out school security from the inside. This is the perfect resource for leaders who need to explain to their stakeholders why investing in enterprise-grade AI infrastructure isn't just a choice; it's the only way to protect and empower their districts in 2026.

Chat with ClassCloud

We’re listening. Let’s Talk!

This newsletter works best when it’s a conversation, not a broadcast. If you want to talk through how any of this applies to your district specifically—or if you have feedback on what would make this more helpful—just hit reply. We read and respond to everything.

Schedule a Virtual Meeting

Thanks for reading,

Russ Davis, Founder & CEO, ClassCloud ([email protected])

Sarah Gardner, VP of Partnerships, ClassCloud ([email protected])

ClassCloud is an AI company, so naturally, we use AI to polish up our content.